How I produced a 25-page research report in one day (and how you can shift from AI conversation to orchestration)

There’s a difference between using AI and orchestrating it.

Most people using AI today treat it like an answer engine: they open a chat window, ask a question, and get a response. Maybe they tweak the prompt. Maybe they don't like the answer and complain that the tech “isn’t quite there yet.”

On a Wednesday, I produced a ~10,000-word, 25-page research report. It included interviews with subject matter experts, industry reports, case studies, and statistics. And it needed to reflect a format and brand tone I’d never written before.

Five years ago, this would have taken me at least five business days. Probably closer to ten. Instead, it took one.

Not because I found a magic prompt. But because I approached the work differently.

Below is what I did, but more importantly, how I approached it.

I share this (somewhat exhaustive) recap for a couple of reasons:

- To show how accessible this is, for around $50 in software.

- To illustrate how to approach large, complex projects with AI.

Maybe you don’t need to write a research report. But if you have a big project to complete or a huge problem to solve (and I’m sure you do), here’s how to think about that work differently.

Step 1: Providing Context

Before Claude.

Before Gemini.

Before drafting a single paragraph.

I created a folder on my desktop.

Inside it, I placed:

- The strategy brief for the report

- The working outline

- Sample reports with a similar tone and structure

- Transcripts of interviews with our subject matter experts

- A few research reports I'd downloaded (then later, a few more AI-generated research reports)

By the time I was done, I had roughly 30 PDFs compiled.
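If you'd rather check the folder with a script than by eye, here is a minimal Python sketch of that inventory step. I did this by hand; the function name is my own placeholder and not part of any tool mentioned here. It simply lists the PDFs in a folder so you can confirm everything is in place before uploading.

```python
from pathlib import Path


def list_context_files(folder: str) -> list[str]:
    """Return the names of all PDFs in the folder, sorted alphabetically."""
    # Only look at the top level of the folder; match the extension
    # case-insensitively so "report.PDF" is included too.
    return sorted(
        p.name
        for p in Path(folder).iterdir()
        if p.is_file() and p.suffix.lower() == ".pdf"
    )
```

Point it at your own folder (for example, `list_context_files("/path/to/your/context-folder")`) and compare the count against what you expect to upload.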

This may sound tedious. But it’s also where most of the leverage comes from.

The difference between a mediocre AI output and a high-quality one is almost always context. Most people skip this, then blame the model.

Doing Supplemental Research (Gemini Deep Research)

Then I reviewed the outline again and looked for any gaps. What additional context (ideas, themes, examples, data) might AI need to write this report?

I used Gemini, specifically Deep Research, which you can easily access under "Tools." I described the report (audience, structure, goals) and asked Gemini to generate Deep Research prompts for specific sections of my outline.

Again: I didn’t ask it to write the report. I didn't even ask it to do the research yet.

I asked it to write the research prompt.

Instead of jumping to outputs, ask AI to help you design better inputs.

For each major section of the outline, I ran Deep Research, downloading each report as a PDF and adding it to that desktop folder. By the time I was done, I had about eight additional documents.
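To make the "ask for the prompt, not the answer" idea concrete, here is a rough Python sketch of how that request could be templated per outline section. I typed mine into the chat window; the function name, the wording of the template, and the sample audience, goals, and section titles below are all illustrative assumptions, not what I actually sent.

```python
def build_meta_prompt(audience: str, structure: str, goals: str, section: str) -> str:
    """Ask the model to write a research prompt, not to do the research itself."""
    return (
        "I'm preparing a research report.\n"
        f"Audience: {audience}\n"
        f"Structure: {structure}\n"
        f"Goals: {goals}\n\n"
        f"Write a Deep Research prompt for this section of my outline: {section}\n"
        "Do not write the report or run the research yet. "
        "Only write the prompt I should use."
    )


# Hypothetical values, for illustration only.
sections = ["Market overview", "Case studies"]
prompts = [
    build_meta_prompt("industry executives", "long-form report", "inform strategy", s)
    for s in sections
]
print(prompts[0])
```

Each string is something you would paste into your chat tool; the research prompt it returns is what you then run in Deep Research.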

Step 2: Building the Writing Engine

Why I Used a Reasoning Model

For drafting, I used Claude Opus 4.6, Anthropic’s newest reasoning model. It’s exceptional. It handles tons of context, maintains coherence across sections, and reasons thoughtfully.

But this article isn’t about “why you should use Claude.”

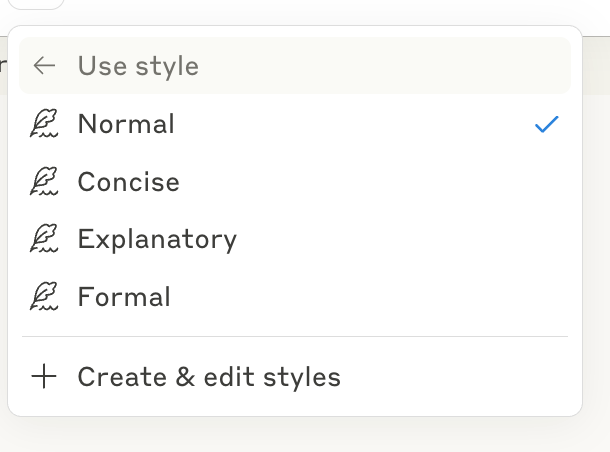

You could do something similar with ChatGPT, Gemini, a custom GPT, or a Gem. What matters more than brand is capability.

For work like this, I strongly recommend a reasoning model: something designed to pause, think, evaluate, not just autocomplete. (In your tool of choice, look for an option like "Thinking.")

Creating a Project Workspace

Inside a Claude Project, I uploaded all 30 PDFs. Again, that gave it access to:

- Interview transcripts

- Reports full of research, case studies, and statistics

- Examples of format, structure, and brand tone

- Strategic context

I wasn’t opening a blank chat window and hoping for brilliance. I tried to give it everything it needed to succeed, right from the beginning.

Too often, people try to solve quality issues at the prompt level. In my experience, most quality problems are upstream. They’re architecture problems, not wording problems.

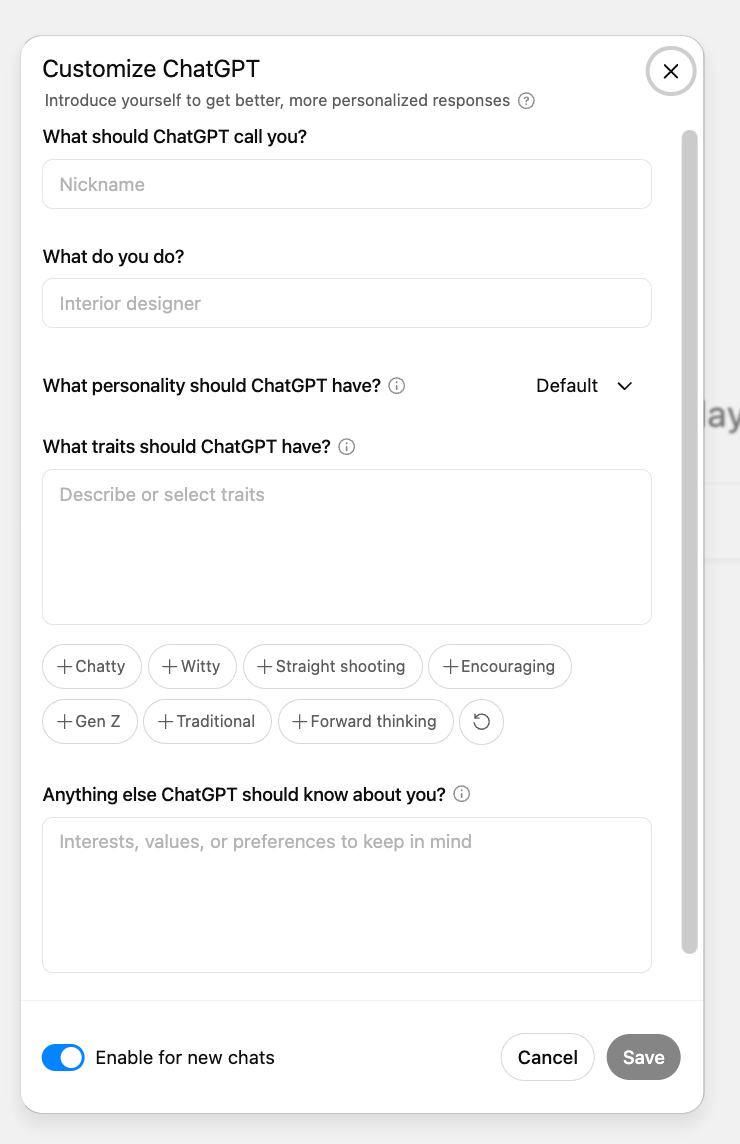

Providing Clear Instructions

Now it was time to write the instructions for this project.

I brain-dumped everything I would tell a junior colleague if I were delegating this report:

- What this report is

- Who it’s for

- What files I'd uploaded and why

If I were handing all those files over to a colleague to complete this project, this is the email I'd send them with the high-level context: look at X PDF for the outline; mirror the tone and format of Y; refer to the other files for background information and research.

Note that I largely did this using (again, AI-powered) dictation, because we typically speak 4x faster than we type.

So I just talked until I felt I'd shared enough, then asked AI to turn it into the instructions for the Claude Project. (This could also be instructions for a custom GPT or a Gem, or simply a thorough prompt. Don't overthink this.)

This made the drafting phase dramatically easier. The model wasn’t guessing about tone or priorities; it understood the hierarchy of sources and the standards it was expected to meet.

Step 3: Drafting the Report

Approaching It Section by Section (Why I Didn’t One-Shot 10,000 Words)

I could have attempted to one-shot the entire report. (I successfully one-shotted a 7,000-word script recently, again using Opus 4.6.) But speed wasn’t the primary goal here; quality was.

Instead, I worked section by section. For each section:

- Claude drafted it using alllllll the information I'd provided.

- I read it, jotting down my feedback in a separate window. No tips, tricks, or clever prompts here — just clear, specific feedback like I'd share with a teammate.

- I replied with all my feedback on that section at once.

- Claude revised the section based on my notes.

That was it. A single revision round, roughly 1,000-2,000 words at a time.

With rich context, clear instructions, and a reasoning model, far less back-and-forth is needed.

And because everything lived in the same project thread, the model retained awareness of previous sections.

It could refer back to earlier arguments, maintain thematic continuity, and build transitions that made the final document feel cohesive rather than stitched together.
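For anyone who thinks in code, the draft, feedback, and single-revision loop described above can be sketched roughly like this. I ran it by hand in the Claude interface, so everything here is an assumption for illustration: `ask_model` and `get_feedback` are hypothetical stand-ins for "send a message to the model" and "a human reads the draft and writes notes," and the stand-in functions at the bottom exist only so the sketch runs without a real model.

```python
from typing import Callable


def draft_report(
    sections: list[str],
    ask_model: Callable[[str], str],
    get_feedback: Callable[[str, str], str],
) -> list[str]:
    """Draft each section, collect all feedback at once, and revise once."""
    final_sections = []
    for title in sections:
        # First pass: the model drafts from the context it already has.
        draft = ask_model(f"Draft the section: {title}")
        # The human reads the draft and writes all feedback in one batch.
        notes = get_feedback(title, draft)
        # A single revision round per section.
        revised = ask_model(
            f"Revise the section '{title}' using this feedback:\n{notes}"
        )
        final_sections.append(revised)
    return final_sections


# Stand-ins (hypothetical) so the sketch runs without any real model or reviewer.
def fake_model(prompt: str) -> str:
    return f"[model reply to: {prompt.splitlines()[0]}]"


def fake_feedback(title: str, draft: str) -> str:
    return "Tighten the opening; add a statistic."


result = draft_report(["Introduction", "Findings"], fake_model, fake_feedback)
print(len(result), "sections drafted and revised")
```

The point of the structure is the single batched feedback step: one read, one set of notes, one revision, then on to the next section.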

Step 4: Verification

After copying and pasting each section into a Google Doc, I shifted to verification.

Because even with an advanced reasoning model and loads of context, I was not going to publish something I had not read and fact-checked.

This is where NotebookLM was indispensable.

I uploaded the same documents into a NotebookLM notebook. (See? I told you that desktop folder would be useful.)

Then, as I read through the 25-page draft, every time I encountered a statistic, quote, or claim, I copied it into NotebookLM and asked:

What sources support this?

NotebookLM scanned the uploaded documents (all 30 sources) and returned responses with citations and footnotes.

If I wanted a different quote, stat, or case study, I could simply ask. It would scan the transcripts and surface relevant excerpts.

Verification, which would normally require manually searching through hundreds of pages, became nearly instantaneous.
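I picked out the statistics and quotes by eye as I read. If you wanted a head start on that checklist, a small script could flag the sentences worth verifying. This is a rough sketch under my own assumptions: the function name is hypothetical, the sentence splitting is naive, the sample text is made up, and it only finds candidates. You still paste each one into NotebookLM and read what comes back.

```python
import re


def find_checkable_claims(draft: str) -> list[str]:
    """Return sentences that contain a number or a quotation, as a rough checklist."""
    # Naive sentence split: break after ., !, or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", draft.strip())
    # Flag sentences containing a digit or a straight/curly double quote.
    pattern = re.compile(r"\d|[\"“”]")
    return [s for s in sentences if pattern.search(s)]


# Made-up sample text, for illustration only.
sample = (
    "Adoption grew 40% last year. "
    "The team was pleased. "
    'One expert called it "a turning point."'
)
claims = find_checkable_claims(sample)
for claim in claims:
    print("Verify:", claim)
```

A filter like this will miss claims that have no numbers or quotation marks, so it supplements a full read-through rather than replacing it.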

Step 5: Orchestrating AI

One of the most powerful aspects of this process wasn’t any single tool. It was the ability to work in parallel.

While I was waiting on a Deep Research report, I might review a section of copy. While Claude was drafting a section, I might be verifying statistics in NotebookLM.

I moved fluidly between tools, each open in its own browser tab, using each for what it does best:

- A reasoning model for synthesis and writing.

- A deep research tool for surfacing themes, examples, and supporting evidence.

- NotebookLM for fact-checking and validation.

I wasn’t searching for a single, perfect tool. I was orchestrating a collection of specialized tools.

Bottom Line: What Actually Saved Me So Much Time

The secret wasn’t a perfect new model. And it wasn’t clever prompting tricks.

It was:

- Approaching the project intentionally

- Aggregating context upfront

- Providing clear expectations and feedback

- Using AI to improve prompts and instructions

- Dictating and transcribing whenever possible

- Pulling in reasoning models, deep research tools, and source grounding strategically

- Working across multiple tools and models in parallel

Five years ago, this report would have required days or weeks of manual research, synthesis, cross-referencing, and painstaking editing.

Now, the constraint isn’t time or effort. It’s discernment: critical thinking, judgment, taste. It’s knowing what AI does best, and what only I can do.

Ultimately, that’s how you pivot from conversation to orchestration.

P.S. Co-written by AI, too.